It Started With a Button: The Agent at the End of the Universe (Part 1)

Somewhere around late 2023, a strange and powerful idea swept through the SaaS industry with the quiet inevitability of a tide, or possibly a plague. The idea was this: large language models could do things now. Useful things. Things that customers might pay for. And if you were running a software company and hadn't yet figured out what to do about it, you were (according to LinkedIn, at least) already dead.

At Hurree, an analytics platform that helps businesses make sense of their data, the conversation started the way these conversations always do. Someone said, "So... should we do something with AI?" in a meeting, and within forty-eight hours, it had a Jira ticket.

The question was never really "should we?" It was "where on earth do we start?"

This is the story of where we started. It's also the beginning of a longer series about how one cautious experiment eventually grew into something far more ambitious, and occasionally quite confusing. But we'll get to that.

The last mile problem (or why dashboards are lonely)

Here's a thing about dashboards that the analytics industry doesn't talk about enough: they are, fundamentally, a language. A rather beautiful one, if you're the sort of person who finds beauty in a well-constructed scatter plot (and there are more of us than you'd think). But like any language, it only works if your audience speaks it.

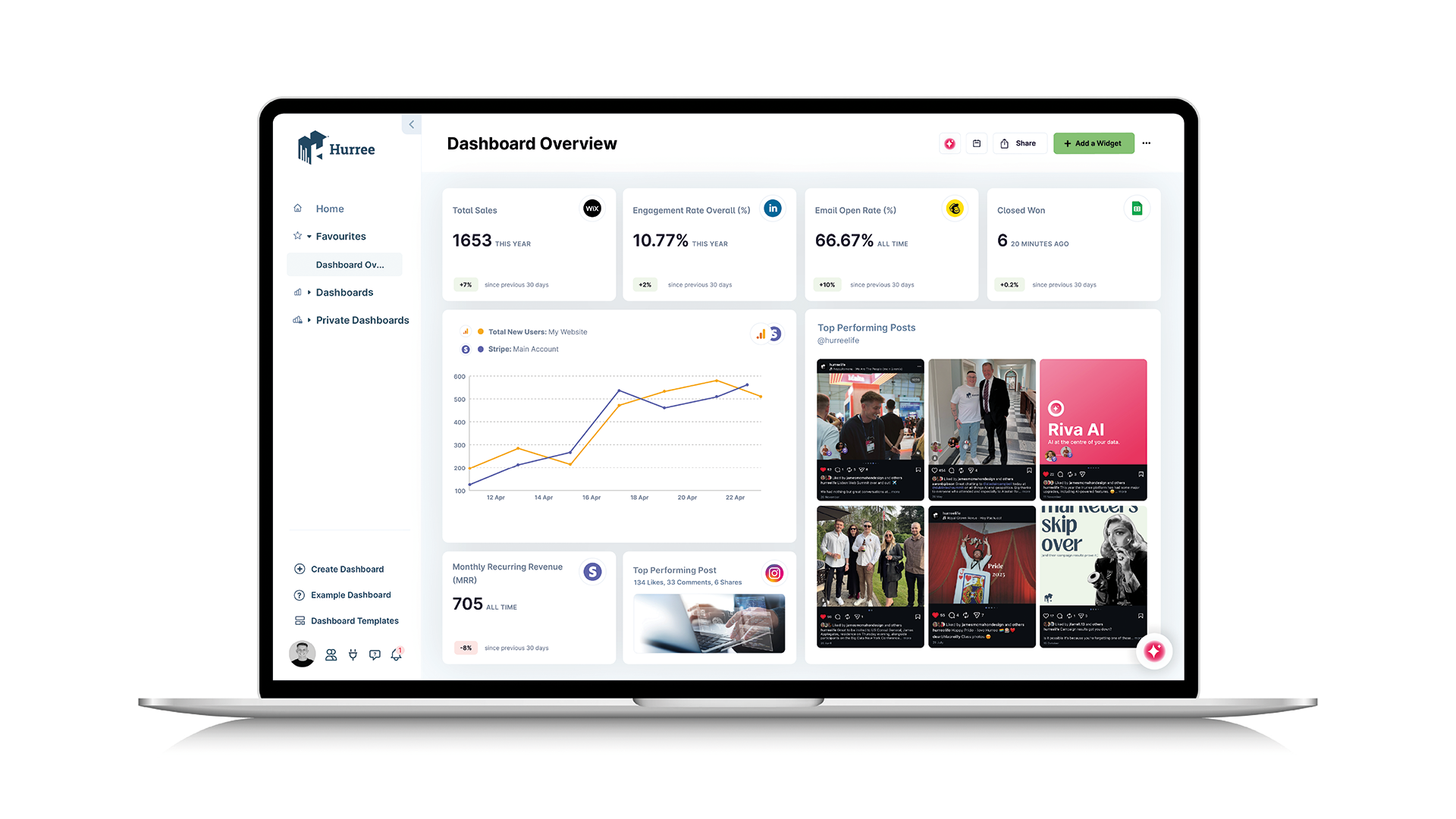

A well-built Hurree dashboard surfaces trends, highlights anomalies, and tells a story through data. The trouble is that story requires a reader who knows what they're looking at. Someone who understands that a spike in that metric is normal, but a dip in this one means something has gone horribly wrong. Someone who lives in the dashboard.

Now think about what happens when those insights need to travel beyond the people who live there. A marketing manager wants to share campaign performance with the C-suite. A product team needs to explain usage patterns to sales. The data is right there, beautifully visualized, completely incomprehensible to anyone who hasn't spent the last three months staring at it.

So what actually happens? Screenshots. Hastily typed bullet points in Slack. A paragraph in an email that attempts to translate twenty widgets into plain English, written by someone who would rather be doing almost anything else. The insight survives the journey in roughly the same condition as a sandwich survives a trip through airport security.

That was the problem we decided to solve first.

Riva Summarize

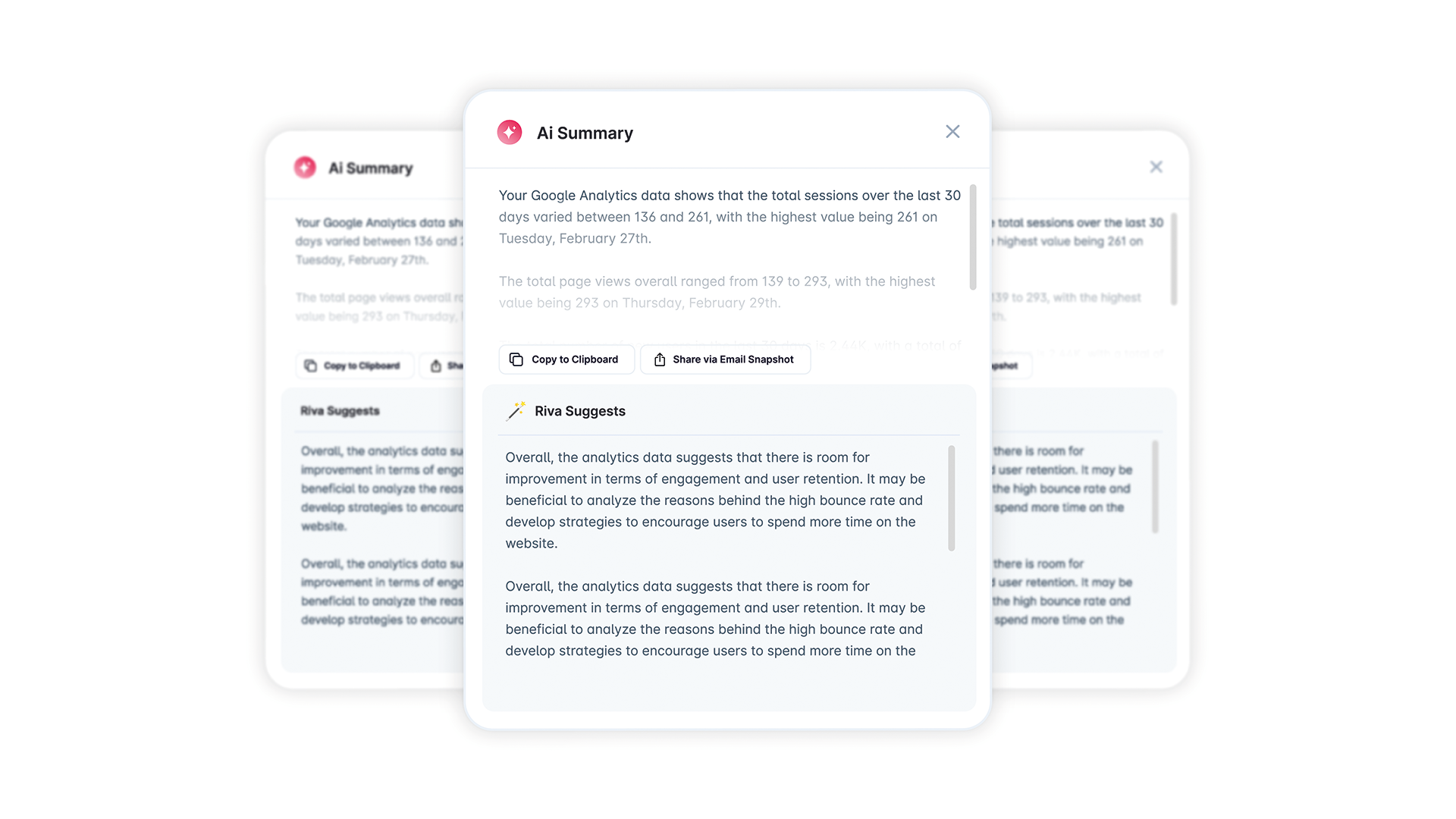

The idea, on the surface, was almost disappointingly simple: put a button on any Hurree dashboard that generates a plain-text summary of what the data actually means. Not a description of the charts (that would be both easy and pointless) but a genuine summary of the insights. The kind of thing a thoughtful analyst would write if they had the time, the inclination, and a strong cup of coffee.

Something you could paste into an email, drop into a report, or hand to someone who has never logged into the platform and never intends to. One button. Real, practical value.

We called it Riva Summarize. It was the first feature under what would eventually become Hurree's AI capability layer, and we kept it deliberately, almost aggressively simple. A prompt, an API call to OpenAI, a supporting Azure Function. No elaborate architecture. No six-month roadmap with milestone reviews. Ship it, learn from it, figure out what comes next.

"Simple" turned out to be a somewhat misleading word for what followed.

Teaching a machine what matters

A Hurree dashboard is not a single chart. It's more like an ecosystem. Multiple data sources, multiple visualisations, filters, date ranges, comparisons, and the accumulated intent of whoever built it, which is a polite way of saying it can get quite complex.

The naive approach to summarising one of these is exactly what you'd expect: scoop up all the data, throw it at a language model, and say "summarize this, please." The results are technically accurate in the same way that describing a symphony as "sounds happening in a particular order" is technically accurate. The model doesn't know what matters. It can't distinguish the 3% uptick that's background noise from the 3% dip that should have half the company on a call. It doesn't understand the relationships between widgets or what the user was trying to track when they built the dashboard in the first place.

So we built a serialisation layer. A translation engine, essentially, whose job was to take the sprawling, visual, context-rich world of a dashboard and distil it into something an AI could actually reason about. Structured, prioritized, and carrying enough context to produce a summary that a human would recognize as useful rather than merely correct.

This was the first big lesson, and it surprised us more than it probably should have: prompting is a business problem, not a technical one.

Getting the API call right was trivial. Any competent engineer can call OpenAI and get text back. But understanding what makes a dashboard summary useful (what a Hurree user would actually want to communicate, to whom, and for what purpose) required deep product thinking. Domain knowledge mattered as much as engineering skill. Possibly more. The serialisation layer wasn't glamorous work. Nobody was going to write a Medium post about it. But it was the difference between a feature that demos well and one that actually gets used.
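To make the idea a little more concrete, here is a minimal Python sketch of what a serialisation layer of this kind might look like. It is an illustration under stated assumptions, not Hurree's actual implementation: the `Widget` shape, the `normal_swing_pct` field, and the `serialise_dashboard` function are all hypothetical names invented for this example. The only point it tries to make is that the domain knowledge (what counts as noise, which direction is good) goes into the text before the model ever sees it.

```python
from dataclasses import dataclass


@dataclass
class Widget:
    """One dashboard widget, reduced to what a summary needs (hypothetical shape)."""
    title: str
    metric: str
    current: float
    previous: float
    normal_swing_pct: float        # assumed: change the dashboard owner treats as noise
    higher_is_better: bool = True  # assumed: direction that counts as good news

    @property
    def change_pct(self) -> float:
        """Percentage change from the previous period to the current one."""
        if self.previous == 0:
            return 0.0
        return (self.current - self.previous) / self.previous * 100


def serialise_dashboard(name: str, purpose: str, widgets: list[Widget]) -> str:
    """Turn widgets into prioritised plain text that could be placed in a prompt."""

    def significance(w: Widget) -> float:
        # How far outside its normal swing did this metric move?
        return abs(w.change_pct) / max(w.normal_swing_pct, 0.01)

    lines = [
        f"Dashboard: {name}",
        f"Purpose: {purpose}",
        "Findings (most significant first):",
    ]
    for w in sorted(widgets, key=significance, reverse=True):
        if significance(w) < 1:
            flag = "within normal range"
        elif (w.change_pct > 0) == w.higher_is_better:
            flag = "notable improvement"
        else:
            flag = "notable decline"
        lines.append(
            f"- {w.title} ({w.metric}): {w.previous:g} -> {w.current:g} "
            f"({w.change_pct:+.1f}%), {flag}"
        )
    return "\n".join(lines)
```

Given a traffic widget that rose 3% against a normal swing of 5%, and a conversion widget that fell 3% against a normal swing of 1%, this sketch lists the conversion dip first and labels the traffic uptick as within normal range. That ordering and labelling is the kind of context a raw data dump would not carry.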

The brain outside the body

The second decision that shaped everything after was architectural, and at the time, it felt almost too cautious.

We built Riva Summarize as an Azure Function. Completely separate from the core Hurree platform. A standalone, serverless microservice with no shared code, no shared deployment pipeline, and no opinions about what the platform was doing on any given Tuesday.

We could have embedded the AI logic directly into the platform. It would have been tidier in some ways. Fewer services, one deployment, a simpler diagram on the whiteboard. There were perfectly reasonable arguments for it.

But we chose isolation, and the reasoning went something like this: we wanted AI to function as a brain the platform could call on. Not a feature wired into its nervous system.

The analytics platform has its own roadmap, its own release cycle, its own hard-won stability. AI, in late 2023, was moving at a pace best described as "alarming." Models were improving monthly. Prompting best practices had the shelf life of a banana. We knew we'd want to iterate constantly, and if every prompt tweak or model upgrade meant a full platform build and redeploy, we'd either move too slowly on AI or accidentally destabilize a product that people relied on. Both options were, to use a technical term, bad.

Serverless isolation gave us a clean boundary. The platform sends data, gets intelligence back. The AI layer can be updated, swapped, rewritten, or set on fire and rebuilt from scratch without the platform knowing or caring. We could upgrade models on a Tuesday afternoon without anyone in platform engineering losing sleep over it.

This pattern (AI as an external brain, not an embedded organ) became the foundation for everything we built afterward. At the time, it felt like a sensible architectural choice. In hindsight, it was one of the best decisions we made. When the ambitions grew beyond a single summarisation feature, and they grew quite a lot, having that boundary already in place saved us enormous amounts of pain.
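The shape of that boundary can be sketched in a few lines of Python. This is a simplified, in-process stand-in, assuming a text-in, text-out contract: in the real setup the call crosses a network boundary to an Azure Function, and the names below (`AIService`, `summarise_v1`, `summarise_v2`, `platform_summarise_button`) are hypothetical and make no actual model calls. What it tries to show is that the platform side depends only on the contract, so the backend can be swapped without the caller changing.

```python
from dataclasses import dataclass
from typing import Callable

# The contract (assumed for this sketch): serialised dashboard text in, summary text out.
Summariser = Callable[[str], str]


def summarise_v1(dashboard_text: str) -> str:
    """Stand-in for one model and prompt combination (no real API call)."""
    first_finding = dashboard_text.splitlines()[0]
    return f"Summary (v1): {first_finding}"


def summarise_v2(dashboard_text: str) -> str:
    """Stand-in for an upgraded model or prompt behind the same contract."""
    count = len(dashboard_text.splitlines())
    return f"Summary (v2): {count} findings reviewed."


@dataclass
class AIService:
    """The isolated AI layer. Here it is an object; in practice, a separate service."""
    backend: Summariser

    def handle(self, request: dict) -> dict:
        # Request and response are plain dicts, mimicking a JSON payload.
        return {"summary": self.backend(request["dashboard_text"])}


def platform_summarise_button(service: AIService, dashboard_text: str) -> str:
    """Platform side: sends data, gets text back, knows nothing about models."""
    response = service.handle({"dashboard_text": dashboard_text})
    return response["summary"]
```

Swapping `service.backend` from `summarise_v1` to `summarise_v2` changes the output while `platform_summarise_button` stays exactly as it was, which is the property the isolation was meant to buy.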

The towel-sized takeaways

If you're thinking about shipping your first AI feature, here's what Riva Summarize taught us, compressed into something you can carry with you:

- Start with a real workflow problem. Not "where can we sprinkle AI?" but "where is communication actually breaking down?" The best first AI feature isn't the most technically impressive. It's the one that removes friction from something people already do badly, and ideally do badly on a daily basis.

- Invest in the translation layer. The gap between your raw data and useful AI output is where all the hard product thinking lives. The API call is the easy bit. Teaching the model what actually matters requires understanding your users, your domain, and the difference between technically correct and genuinely helpful. That's where the real work is.

- Isolate like you mean it. Keep your AI capabilities in their own deployable, iterable, occasionally-on-fire space. Your core product has earned its stability. Your AI layer needs the freedom to move fast, fail cheaply, and evolve without dragging the rest of the codebase along for the ride.

What came next

Riva Summarize was a single feature. One button, one function, one very specific job. But it proved something that mattered quite a lot: AI could deliver genuine value inside the Hurree platform. Not as a gimmick, not as a checkbox on a feature comparison spreadsheet, but as a real capability that made the product better at its core job of helping people understand their data.

Which, naturally, raised a question. A dangerous question. The kind of question that leads to late nights, architectural diagrams on whiteboards, and a steadily growing collection of Azure Functions.

What else could the brain do?

The answer is where this series is headed. From summarisation to forecasting, from chatbots to something that starts to look genuinely agentic, the journey turned out to be far more interesting (and far messier) than anyone expected.

But that's a story for next time. Don't forget your towel.
